Wrap-Up

Caution: Spoilers ahead!

Congrats to Cardinality for being the first to save the

dinosaurs! Through the once

inconspicuous bookshelf, they traveled back to the past to stop the prehistoric asteroid from

hitting Earth.

With the power of magic and creativity, they were able to DEWEY DECIMATE THE ASTEROID and save the

dinosaurs.

They completed the hunt in 2 hours 24 minutes at 8:24 on

July 16, 2021.

We had 367 teams register, 322 teams complete at least one puzzle, and 181 teams that completed the

entire hunt!

And special shoutouts to the following teams:

- Just Zis Guy, Y'Know?, Dirty Bayes, Wombat, and BestBeans for finishing the hunt with 0 incorrect guesses!

- Inert Oaken Heron, Technology-Starved Belated Individual, Rage, Tweleve Pack, Belmonsters, and Extra Contestant from /r/PictureGame were all very close, with one incorrect guess each (at time of completion)

- Day Jog for being the most prolific guesser

- Dogs Bound By Rules for being the first team to solve a puzzle (Harry Potter in 2m 21s)

If you enjoyed our hunt, we would really appreciate it if you considered donating to our Ko-fi page! Donations will be used to pay off the cost of hosting this website ($5 / month) and the domain ($12).

If you would like your team to be anonymized/removed from this wrapup, please let us know by emailing us.

Note: This wrap-up is written from Ethan's perspective.

Goals

Difficulty and Size

Vinhan and I started seriously thinking about Hunt20 again back in January of 2021. We were mildly

constrained in number of puzzles by our name (lol), but we

wanted our hunt to be more difficult than it was previously. We participated in many other hunts and enjoyed

the difficulty of CMU and

Paradox puzzlehunt.

Based on our schedules, we had to either start right before or right after Galactic Puzzle Hunt, which

limited difficulty, as anything too hard

would burn out people before GPH or would be too difficult to complete after (what we presumed to be)

another great hunt.

We also knew that we had some puzzlers that would still be new to puzzling - for example we advertised to

our school friends for the original Hunt20 and knew that a couple of them would be coming back

for this hunt (though not participating in any other hunts in between). Though we didn't want to cater

the hunt to them, we wanted all teams to have the experience of solving a couple of puzzles.

We settled on a two-round hunt that would get more difficult, with difficulty around DP, DaroCaro, or

CMU. We wanted teams

to be able to solo the hunt, so we didn't want to have any giant puzzles that required lots of brute

force work.

Quality

Looking back at our first hunt, the puzzles are definitely not something I am proud of now. We had errors

in a couple of puzzles, misused (or unused) flavortext, and boring mechanics. Many of these

issues could have been fixed by

having more experienced testsolvers or, honestly, by not running our first hunt at all and getting more

practice first, but back then,

Vinhan and I were quite inexperienced puzzlers (I had only completed CS50x and both of us had done

Copuzzle). We didn't realize the size of the puzzling world,

and without that knowledge, our puzzles suffered. This time around, I was able to connect with some more

experienced teams to testsolve, and for that, I am

deeply grateful.

One of the first places I looked when I started thinking about Hunt20 again was

last year's feedback form. Our first hunt was very rough, but I really appreciate the

feedback teams gave us on puzzles and hunt organization - it really opened our eyes

on how to improve.

If you were wondering where last year's hunt was, I actually deleted last year's website.

I didn't want anyone to find that hunt and decide not to participate

in this year's because of how low quality our previous hunt was. Trust me: it's not something worth

looking at.

Something that I wish I had was more experienced writers/puzzlers in general on the team. Due to lack of time,

Vinhan unfortunately couldn't write any puzzles this year,

which meant the puzzles weren't as varied (in terms of theming,

knowledge, mechanics, etc.) as they would have been with more writers. Perhaps unsurprisingly, writing 19 puzzles for

one hunt is difficult.

Finally, writing puzzles without any Among Us or COVID references was very important to me 😛

Hints

After our first hunt, I disliked punishing teams for asking for hints. Last year's Gotta Catch 'em All was

pretty much unfair

without the hint, and I didn't want anyone

to feel stuck, yet pressured not to reach out for help for fear of losing points. Further, I aimed to have

freeform hints, as I felt that freeform hints

made hunts much more fun for both solvers and hint responders.

Even though our team was only 2 people, we were hoping that our relatively 'easier' puzzles would mean

fewer teams would be asking for hints, and that we would be able to answer them

within a couple of minutes (not counting when we were both sleeping). Further, we only

released 2 hints per day, which we felt was a reasonable number to answer.

Hunt Style

Puzzle unlocks were pretty much linear - completing one puzzle would unlock another. We aimed to have

unlocks alternate between 'easy' and 'difficult' so that teams had a larger variety, did not only skip

the difficult puzzles, and didn't hit a solid wall. We based difficulty off of solve times of our

testsolvers.

We are very happy that we made the change from Wix / Google Forms to gph-site, as it's a lot more elegant

and solver-friendly.

Writing

Theme and Story

One of the first themes we discussed was books, and we decided to stick with the concept. The plot twist

to

go back in time to save the dinosaurs came up

while I was writing the first version of the metapuzzle, a crossword, in which one answer was

SAUROPOD. The word stuck with me, and I wanted to somehow

incorporate it into the story, which then turned into the dinosaur story we have now. Although I later

rewrote the first meta many times, the dinosaur theme never left. It

felt only natural to start out with young adult books and transition to children's books to symbolize

'going

back in time'. Further, crayons seemed to be thematic to the children's theme (for the art).

We knew that many puzzlers would not be familiar with the books we chose, so while theming was

important,

we ensured that all book-content would be easily searchable.

Puzzles

I had a few unused mechanics from the first iteration of Hunt20, but there were a lot of other

ideas that I needed to develop.

I looked at pretty much all the puzzles I could find from past hunts, the MIT Mystery Hunt Index,

and the Puzzling

StackExchange, which were all valuable resources. Another read I liked is the

MIT Puzzle Writing Introduction. Due to the small team size (of 2),

whenever I had an idea, I pretty much just ran with it. We organized puzzles using Discord and Google

Sheets, as I didn't think Puzzlord

would be needed for us (although it is most definitely a cool and useful tool for larger hunts).

When writing puzzles, my goal was to have a list of possible answers that fit the meta, then find a

corresponding book and mechanic to fit the answer. In actuality though,

I ended up having a mechanic and finding an answer to fit it. While this isn't necessarily 'bad', I feel

that oftentimes, the answers don't feel like a satisfying conclusion

to the puzzle. Even worse, some puzzle answers aren't exactly real-world phrases (green paint answers).

Further, we made the decision to limit titles to just the book names, which potentially

caused red herrings as puzzles didn't always deal with the book information, instead

just using character names or references to the books. We debated changing the names of all the puzzles,

but we felt

the hunt was more thematically strong in its current state.

Lastly, I added 'Keep going!' messages to allow solvers to check nearly all related cluephrases

that could have possibly been extracted while solving. While I can't see the number of submissions of

these

cluephrases, I hope that they helped!

Accessibility

When solving, I love it when I'm able to smoothly copy a puzzle to Google Sheets or another medium to work

in. We aimed to have a 'copy to clipboard' button

or Google Sheets equivalent

for all puzzles that were not easily copyable, which we were able to accomplish (though it led to some

errors when testsolving, when we would only change

the version on the website - definitely something we will remember to take note of in the future).

Another tool we wish we implemented

was DaroCaro's 'drawing' tool or something similar, but we just didn't have the time.

For Google Sheets copies, if you click the little arrow next to the sheet on the bottom, click

"Copy to" then "Existing spreadsheet",

you can copy all the formatting in its original state to a sheet that you/your team have been working on.

We had some teams

comment that border formatting got messed up when copying, and this is the solution!

Puzzles were intended to be seen on a desktop, but we did create override styles to allow them to

be solvable on mobile as well. It's really nice

to be able to pull out my phone when I'm away from my computer to take a look at some puzzles. There was

at least one team

that completed the hunt entirely from their phone :)

We didn't consider solvers who wanted to print puzzles out, and that is something we will pay

more attention to if we run the hunt again. However, creating full-blown

PDFs for each puzzle can be difficult, especially maintaining consistency, as errata need to be

fixed in 3 different places.

I am happy to say that Treasure Island's playable version was well-received though! Perhaps a

playable triangular Slitherlink would have been nice as well.

We received emails concerning colorblind accessibility and those who are visually impaired. I did

my best to include alt text and colorblind friendly

versions on as many puzzles

as possible without spoiling anything, but some puzzles are unfortunately not solvable if one is visually

impaired.

Testsolving

Our first hunt severely lacked experienced testsolvers and we did not want to fall into that same hole

again. Unfortunately, due to our small team size,

we were only able to test puzzles in their initial state once or twice, with Vinhan (and occasionally Ty

and Preston). We were able to have 3 full hunt playthroughs however,

thanks to team Please Clap, Puzzle Hunt CMU, and UMD Puzzle Club. Vinhan and I were in Discord calls with

these teams, muted, and watched their thought process.

Each full-hunt testsolve gave us a lot of insight into how teams solved our puzzles and

led to improvements in puzzle clarity. It was also a lot of fun watching teams get a-ha's and find

break-ins that we intended them to find.

When solving, we specifically instructed these teams not to speedrun our puzzles. Rather we

requested all team members to solve each puzzle together

to maximize the number of eyes on each puzzle, for feedback/error checking purposes. Through these

playtests, on average, teams finished the hunt

in around 3-4 hours and finished off the rest of the puzzles they skipped in ~2 hours.

The DaroCaro team also helped test our puzzles, though not in the traditional

"full hunt playthrough", rather solving puzzles as they had time. Their feedback (as well as all the

other teams') is

greatly appreciated!

Reflections

General

Overall I'm very proud of the hunt that Vinhan and I

were able to make. We have most definitely improved from our first hunt, and while this hunt wasn't

perfect, it was an educational experience to help

us become better puzzlers and writers. I had a lot of fun seeing people solve our puzzles on Google

Sheets,

watching our Discord channel being flooded with submissions,

and answering hints.

I'm still amazed that Vinhan and I were able to construct this all. Back in January, all I

had was a couple of puzzles in a Google Sheet;

I had no idea how to use gph-site or anything, and I was extremely skeptical that I'd be able to

learn. As I started to load puzzles

and hosted the website on a custom domain, I was amazed that everything was coming together.

I made one change to the leaderboard a couple of hours after the hunt started - adding the

"20" symbol

to teams that completed all 20 puzzles. This seemed to encourage a couple of teams to go back and

solve (or backsolve) the

final puzzles they skipped to get the elusive 20 :)

Puzzles

Though I wanted the hunt to be strongly themed to books, most puzzle content did not

reference book content at all, besides some flavortext references.

I'm still happy with the final product, regardless, as puzzles that rely on endlessly searching

fandom pages do not seem fun.

Based on "fun"-ness, the highest ranked puzzle was the second meta, Jurassic Mark, the

second highest Where the Wild Things Are, and

tied for third, The Three Little Pigs and The Very Hungry Caterpillar. The second round puzzles

were ranked significantly higher in "fun"-ness than the first round, which was

expected as the first round is also relatively easier (and has fewer layers).

Most people's least favorite puzzle was The Lorax, mostly because they didn't solve it (or

backsolved). Matilda was a very mixed

bag - some people put it as their favorite puzzle, while others as their least favorite (likely due

to the inelegancies in the X and DISCO->DISCOUNT. A lot of teams got

stuck on the cluephrase as well).

I think better presentation would have improved a lot of teams' experiences with some of the

puzzles - for example, many people were

stuck on PRESTO NEREID (or just PRESTO) in The Hunger Games. Making some sort of visual that better

clued you were looking for a 6 letter word

that was made up of the combination of PRESTO and NEREID probably would have improved the solving

experience. Further, the X clue in Matilda was quite

unfortunate, and perhaps a different colored X could have helped it slightly. There was the option

to clue SEXT -> SEXTANT, but we decided against

that as it didn't seem very appropriate for a round based on children's books. There were also some

complaints about image quality in I Spy (not being

able to zoom in easily), and putting the images individually into a table may have been more

effective.

I am not sure if this would take a long time to load though.

Hints

We ended up receiving hint requests (not counting hint

responses/additional help through email, which I'd estimate

at around 150 emails). We were very generous

with hints, allowing teams to reply back to the hint email to get additional help at no penalty.

If teams ran out of hints, we would grant them more if they asked (provided that they were not

abusing the system).

We wanted as many teams as possible to experience the feeling of solving a meta (or even the entire

hunt!),

which also explains why we extended the hunt past the normal weekend.

For the first 3 days, during the day, we were able to stay on top of hint requests, usually

answering them within 3 minutes.

Email responses were a little slower

though, as I didn't always get notifications for the emails :( However, for the time period between

~4am and ~8am ET, both Vinhan and I were asleep so no hints were answered.

On Saturday and Sunday, I 'volunteered' myself to stay up this late (4am) to answer hints. On

Saturday ~8am, Vinhan and I

woke up to 25 hint requests, and on Sunday, luckily only 12. In the first 3 days, the longest a

team went without a response was 4 hours.

For most US based solvers, the hint response speed was fine, but for others, the time discrepancy

is unfortunate.

We had written "prewritten" hints

for all the puzzles, but as time passed, many teams had asked very similar questions so we were

able to copy and paste from

previous responses (which led to the speediness).

After Monday though, hint response speed slowed down slightly as Vinhan and I had other

commitments.

I decided to go to bed at a more reasonable 3am. One "funny" story is that I actually fell asleep

in the middle of answering a hint in

bed, leaving a team hanging for 2 hours while Vinhan was away :( I'll definitely need to catch up

on some zz's before GPH :')

Teams generally agreed that the hints were helpful, though perhaps sometimes a little *too*

helpful. We tried not to spoil any

major aha's, but I do agree we often gave hints to help solvers with not just the immediate step,

but step(s) after. In my opinion, I would

rather have a hint be a little too helpful than not helpful enough, as I personally feel really bad

asking hinters for help again and again on the

same puzzle. I'm sure other teams may disagree with me here though.

Difficulty

Going in, we expected that competitive teams would finish within a few hours, then more casual

teams would

continue solving throughout the week. This worked exactly as planned - we had many teams finish

quickly, but

keeping the hunt open past the weekend allowed an additional 80 teams to finish past the

"typical" 6PM Sunday end (for a 2 day weekend hunt).

We also had over 160 teams register past the hunt start date (and many of them completing the

hunt!), and 49 teams that registered past 6PM on Sunday (the 18th).

We received a couple of comments saying that the difficulty scale was great, perfect for a

solo hunt and a good warmup to GPH

for both casual / competitive teams. On average, puzzlers felt the hunt was slightly easier than

expected (based on intuition and advertised difficulty), but

we think that's perfectly fine - we hope teams felt good that they were solving puzzles! There were

a couple of teams that told us

that this was the first time they had completed a puzzlehunt. Congrats to them!

Here's a chart comparing the relative length/difficulty of our hunt,

compared to some recent hunts. Data and chart came from Huntinality's

wrapup.

Here's a graph of all the finishers by time.

Other

Future

This site will never be deleted; however, in about 9 months (around May), I probably will not renew

the

domain name.

In all honesty, Vinhan and I don't think we'll have the time to host an entire other Hunt20 - but

we will see. There's a lot of fatigue from creating

and hosting this hunt.

We plan to still be part of the puzzling community though :)

[UPDATE] The site is now updated to the final static state and will be available at this domain

until said domain expires. Then, it can be

found at ethannp.github.io/hunt20-static/ (I'm hosting the static version

using GitHub Pages). Depending on traffic, I may rebuy

the domain for one more year.

Credits

Puzzle writers: Ethan (and Ty for Treasure Island)

Testsolvers: Vinhan, Preston, team Please Clap (Jonah, Ben, and Ian), Puzzle Hunt CMU,

UMD Puzzle Club (Dawson Do and Ryan Thomas),

and DaroCaro (Darren and Caroline)

Art: Ethan and Vinhan

Web dev: Ethan

A special thanks to Jonah (and the other Please Clap members) for giving us lots of feedback on

our puzzles - they were the first

experienced team we tested on and helped me improve puzzles to a much better state.

Story art mainly consists of traced vector versions of reference images found online.

Crayon drawings were drawn by hand, also

using reference images.

Tech

The website is a fork of gph-site, which is written in Python using Django.

GPH-site was amazing to use; it was well documented and easy to pick up, even with little web

hosting experience. I had a little bit

of HTML/CSS/JS/Python experience in the past, and Django was luckily easy to learn.

The website is hosted using PythonAnywhere on the Hacker plan. I load tested

the site using Locust and found that

the

current plan would likely be adequate, even at the time the hunt started (in testing, the site did

fine with 1000 concurrent connections).

At the start of the hunt, the site was a little slow to load, but there were luckily no crashes or

downtime.

Inkscape, crayons, and

paper were used to create the art (the second round bookshelf / puzzles are handdrawn).

Treasure Island's

playable version was created with Scratch. Why Scratch?

I had lots of experience with it as a kid, and it seemed to be the easiest way to export and change

if needed. If there was an error on

the grid or something, I could change it even if I was away from my main computer, and it would

automatically update (without me having

to manually export or anything).

Cheating

It's unfortunate that there's an entire section dedicated to cheating, but I feel like it's

something

I should talk about.

During the hunt, there were 3 clear instances of cheating. Two teams had each created an

alternate account

and solved many puzzles within a few minutes. It's understandable if the first 3 puzzles were solved

instantly

(as a team could have been created right after solving the first 3), though any further would be

impossible

without prior knowledge of the puzzles ahead. We asked them how they were doing this, and we got no

response.

The accounts never logged back in after that.

Secondly, there was a team that had asked for help for our puzzles on Reddit.

They had posted the direct text and a screenshot of the puzzles, hiding the fact that this was from

an ongoing hunt/our hunt.

While our rules do not explicitly disallow teams from asking public forums, I'd argue it's

considered pretty bad sportsmanship

and possibly constitutes "helping other teams". Further, the specific subreddit this was posted to

disallowed

posting "puzzles from ongoing contests".

We know who did this, based on

publicly available information and timing and have told them not to do this in the future.

A case of cheating also appeared in our last hunt, but I won't expand much further as our

previous hunt is not something I want to return to.

Appendix

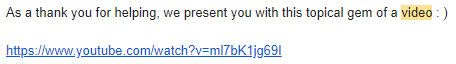

Advertisements

These came in the form of flag submissions :)

- Team Yuki - yukihunt.club

- Team Kevin - KevinsPuzzles.com

Guesses

Some of our favorite guesses:

- I am Number Four

- BREADWINNER by MCSquareD - Wrong hunt perhaps? 😉

- OKGOOFFWITHTHOSEHOMOPHONESORWHATEVERBUTWHATABOUTEXTRACTION

INTERROBANGMOMENT

MAYBEISHOULDJUSTGOTOBED

GOODNIGHTHUNTPEOPLE

HUNTTWENTYWRITERS

THISISWHYWESHOULDHAVENOALPHANUMERICCHARACTERSALLOWEDINGUESSESWEARYEMOJI

NON

DANGIT by no gnus is good gnus

- The Hunger Games

- ETHANPLEASEPUTMEINTHEWRAPUP by 25 cents a puzzle - your wish has been granted

- Divergent

- IKNOWTHATYOUAREWATCHINGMESOIAMPERFECTLYFINEWITHWASTINGAGUESS by Useless Guys Only - 👀👀

- META: Percy Jackson

- PIRSYMAGICTREEHOUSE by come back to us later and Useless Guys Only

- Matilda

- (G/A)STRINGENT by multiple teams

- Frog and Toad

- FLAGTEAM by many teams :(

- Ugly Duckling

- NOTLIKEDUCK by Red Giselle -


- META: Jurassic Mark

Pretty much all of the wheel-of-fortune guesses were really funny to read as they were coming in

Team Flags

Here are (almost) all the flags submitted!

Anything else? Contact us at hunt20info@gmail.com

See you all (hopefully in GPH!!),

Hunt20 ❤️